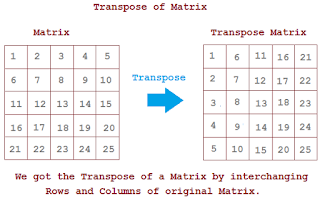

Improving the efficiency of algorithms for fundamental computations can have a widespread impact, as it can affect the overall speed of a large number of computations. Matrix multiplication is one such primitive task, occurring in many systems, from neural networks to scientific computing routines. The automatic discovery of algorithms using machine learning offers the prospect of reaching beyond human intuition and outperforming the current best human-designed algorithms. However, automating the algorithm discovery procedure is intricate, as the space of possible algorithms is enormous. Here we report a deep reinforcement learning approach based on AlphaZero [1] for discovering efficient and provably correct algorithms for the multiplication of arbitrary matrices. Our agent, AlphaTensor, is trained to play a single-player game where the objective is finding tensor decompositions within a finite factor space. AlphaTensor discovered algorithms that outperform the state-of-the-art complexity for many matrix sizes. Particularly relevant is the case of 4 × 4 matrices in a finite field, where AlphaTensor’s algorithm improves on Strassen’s two-level algorithm for the first time, to our knowledge, since its discovery 50 years ago [2]. We further showcase the flexibility of AlphaTensor through different use-cases: algorithms with state-of-the-art complexity for structured matrix multiplication and improved practical efficiency by optimizing matrix multiplication for runtime on specific hardware. Our results highlight AlphaTensor’s ability to accelerate the process of algorithmic discovery on a range of problems, and to optimize for different criteria.

We focus on the fundamental task of matrix multiplication, and use deep reinforcement learning (DRL) to search for provably correct and efficient matrix multiplication algorithms. This algorithm discovery process is particularly amenable to automation because a rich space of matrix multiplication algorithms can be formalized as low-rank decompositions of a specific three-dimensional (3D) tensor [2], called the matrix multiplication tensor [3, 4, 5, 6, 7]. This space of algorithms contains the standard matrix multiplication algorithm and recursive algorithms such as Strassen’s [2], as well as the (unknown) asymptotically optimal algorithm. Although an important body of work aims at characterizing the complexity of the asymptotically optimal algorithm [8, 9, 10, 11, 12], this does not yield practical algorithms [5]. We focus here on practical matrix multiplication algorithms, which correspond to explicit low-rank decompositions of the matrix multiplication tensor.

In contrast to two-dimensional matrices, for which efficient polynomial-time algorithms computing the rank have existed for over two centuries [13], finding low-rank decompositions of 3D tensors (and beyond) is NP-hard [14] and is also hard in practice. In fact, the search space is so large that even the optimal algorithm for multiplying two 3 × 3 matrices is still unknown. Nevertheless, in a longstanding research effort, matrix multiplication algorithms have been discovered by attacking this tensor decomposition problem using human search [2, 15, 16], continuous optimization [17, 18, 19] and combinatorial search [20]. These approaches often rely on human-designed heuristics, which are probably suboptimal. We instead use DRL to learn to recognize and generalize over patterns in tensors, and use the learned agent to predict efficient decompositions.

We formulate the matrix multiplication algorithm discovery procedure (that is, the tensor decomposition problem) as a single-player game, called TensorGame. At each step of TensorGame, the player selects how to combine different entries of the matrices to multiply. A score is assigned based on the number of selected operations required to reach the correct multiplication result.
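To make the link between algorithms, tensor decompositions and TensorGame concrete, here is a minimal NumPy sketch for 2 × 2 matrices. It is an illustration under stated assumptions, not AlphaTensor's implementation: the row-major index convention, the helper names (`matmul_tensor`, `play`, `multiply_with_factors`) and the use of a plain step count as the score are all choices made for this example. The factor matrices `U`, `V`, `W` encode Strassen's seven products, and the standard algorithm is included for contrast.

```python
import numpy as np

def matmul_tensor(n):
    """Matrix multiplication tensor for n x n matrices (row-major indexing,
    an assumption of this sketch). T[a, b, c] = 1 when entry a of A times
    entry b of B contributes to entry c of C = A @ B."""
    T = np.zeros((n * n, n * n, n * n), dtype=int)
    for i in range(n):
        for j in range(n):
            for k in range(n):
                T[i * n + k, k * n + j, i * n + j] = 1
    return T

# Strassen's algorithm as a rank-7 decomposition. Row r of U and V gives the
# linear combinations of entries of A and B multiplied in product r; row r of
# W gives how that product is added into the entries of C.
U = np.array([[1, 0, 0, 1], [0, 0, 1, 1], [1, 0, 0, 0], [0, 0, 0, 1],
              [1, 1, 0, 0], [-1, 0, 1, 0], [0, 1, 0, -1]])
V = np.array([[1, 0, 0, 1], [1, 0, 0, 0], [0, 1, 0, -1], [-1, 0, 1, 0],
              [0, 0, 0, 1], [1, 1, 0, 0], [0, 0, 1, 1]])
W = np.array([[1, 0, 0, 1], [0, 0, 1, -1], [0, 1, 0, 1], [1, 0, 1, 0],
              [-1, 1, 0, 0], [0, 0, 0, 1], [1, 0, 0, 0]])

def play(T, actions):
    """Replay a sequence of (u, v, w) moves in a TensorGame-like loop.
    Each move subtracts the rank-one tensor u (x) v (x) w from the state.
    Returns the number of moves used to reach the zero tensor, else None."""
    state = T.copy()
    for steps, (u, v, w) in enumerate(actions, start=1):
        state = state - np.einsum("a,b,c->abc", u, v, w)
        if not state.any():
            return steps
    return None

def multiply_with_factors(A, B, U, V, W):
    """Multiply A and B using one scalar product per row of the factors."""
    n = A.shape[0]
    products = (U @ A.ravel()) * (V @ B.ravel())
    return (W.T @ products).reshape(n, n)

T = matmul_tensor(2)
strassen_moves = list(zip(U, V, W))

# The standard algorithm: one move per (i, j, k) triple, so n**3 = 8 moves.
eye = np.eye(4, dtype=int)
standard_moves = [(eye[i * 2 + k], eye[k * 2 + j], eye[i * 2 + j])
                  for i in range(2) for j in range(2) for k in range(2)]

strassen_steps = play(T, strassen_moves)
standard_steps = play(T, standard_moves)

A = np.array([[1, 2], [3, 4]])
B = np.array([[5, 6], [7, 8]])
C = multiply_with_factors(A, B, U, V, W)
```

In this sketch, fewer moves to reach the zero tensor means fewer scalar multiplications in the resulting algorithm, which is why a shorter game corresponds to a more efficient matrix multiplication algorithm.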
